DeepSeek AI on Friday unveiled DeepSeek-V4 Pro and Flash, its highly anticipated flagship open-source models, which it claims match world-class generative AI systems on capability and offer a 1-million-token context window at a fraction of the cost.

Released on Hugging Face as a pair of open-sourced Mixture-of-Experts (MoE) LLM models that deliver frontier-level performance at a dramatically lower cost, DeepSeek-V4 Pro and Flash models were launched as preview versions with fully open weights under MIT license. The V4 models’ advanced capabilities have put it next to Gen AI models like ChatGPT 5.4, Claude Opus 4.6 and Gemini 3.1 Pro, claimed DeepSeek.

🚀 DeepSeek-V4 Preview is officially live & open-sourced! Welcome to the era of cost-effective 1M context length.

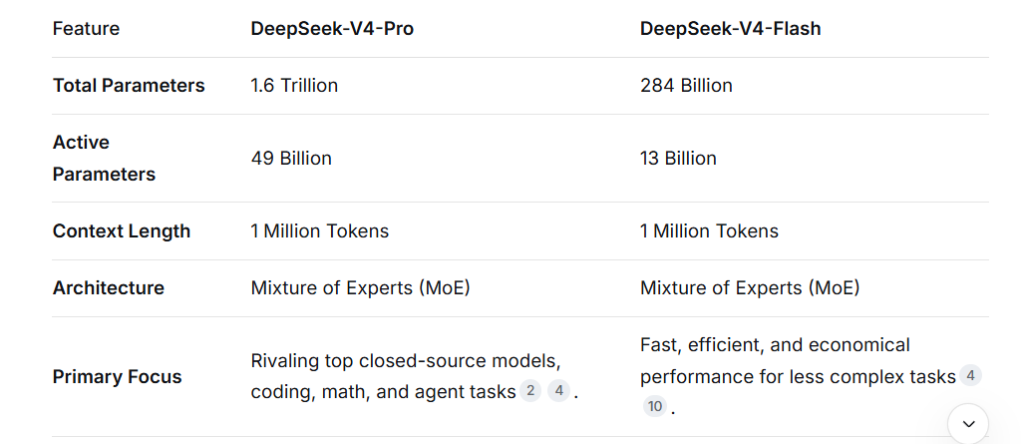

🔹 DeepSeek-V4-Pro: 1.6T total / 49B active params. Performance rivaling the world’s top closed-source models.

🔹 DeepSeek-V4-Flash: 284B total / 13B active params.… pic.twitter.com/n1AgwMIymu— DeepSeek (@deepseek_ai) April 24, 2026

DeepSeek-V4 Pro has 1.6 trillion total/parameters/49B active per token and SOTA open-source for agentic coding that tops open-source AI models in MATHS/STEM/Coding/Reasoning while its V4 Flash model has 284B total parameters /13B per token built at a highly cost-effective API pricing,

DeepSeek AI has claimed that the release of its V4 models with native 1M token window will set a new “standard configuration” for all Gen AI models.

Token window (or token window context) is the maximum amount of information, measured in tokens, an LLM model can process, analyze, remember and use at one time while generating a response.

For context, an AI model with 1 million token context window would be able to process tens and thousands of files for entire codebases, analyze years-long legal/medical/official records, engage in extremely long multi-turn conversations among other detailed tasks.

While DeepSeek-V4 models offer the same capabilities as other world class Gen AI models, it’s the highly effective pricing that could be the game changer in the AI industry.

For DeepSeek-V4 Flash, cache-hit input tokens are priced at $0.028 per million, cache-miss inputs at $0.14 per million and output tokens are $0.28 per million. For flagship DeepSeek-V4 Pro model, the cache-hit inputs cost $0.145 per million, cache-miss inputs at $1.74 per million and outputs $3.48 per million.

DeepSeek’s V4 launch from China comes in the wake of heightened tensions between US and China in the global AI dominance race, as the former has accused China based companies of profiteering from American made Gen AI models, in the name of open-source. Meanwhile, China has accused the U.S. of gatekeeping artificial intelligence technology through sanctions, supply chokeholds and institution boycotts.

Founded in 2023, DeepSeekAI first gained global attention in January 2025 with the release of their open-source flagship reasoning model DeepSeek-R1, built at a fraction of cost but matched world class GenAI models. DeepSeek R1 launch triggered a “Sputnik” moment for the tech industry, wiping billions from the U.S. tech stocks overnight.

Known for its mixture-of-experts (MoE) architecture, DeepSeek AI emphasizes on open source/open design LLM models but has come under criticism from its counterparts in the U.S. for engaging in distillation of their models.

Also Read: What is Knowledge Distillation?

Whether DeepSeek’s benchmark claims hold up under independent scrutiny remains to be seen, but the cost-to-capability ratio is the story Silicon Valley will be watching this weekend. At roughly one-twentieth the price of comparable closed-source models, V4 makes the premium charged by frontier labs harder to justify with every release and its repercussions on the U.S. tech industry will be known soon.

Also Read: Open Source AI Models: US Sees Threat, China says Fair Deal