Russian-American novelist Ayn Rand identified the need for a constitution to “protect citizens from the very government tasked with protecting them from criminals”.

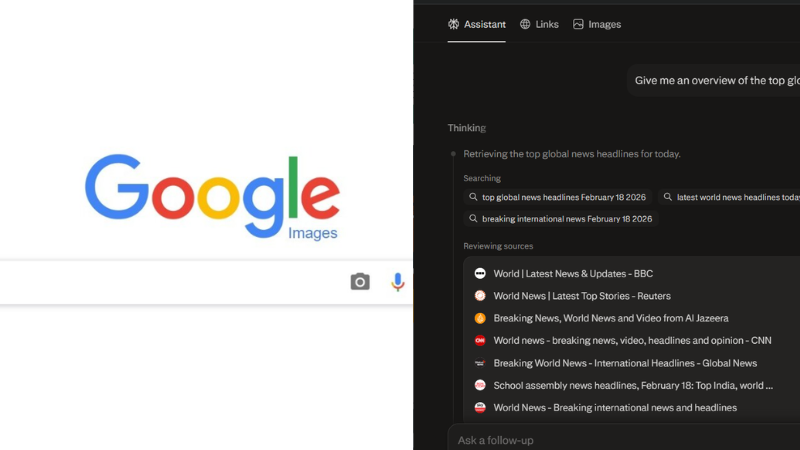

As the artificial intelligence (AI) industry takes giant strides, embedding the technology into modern-day living, it has become urgent to talk about a built-in safety valve for AI models. Experts are increasingly putting an uncomfortable question to AI firms: what kind of actor is AI becoming, and can it be trusted to behave when no one is watching?

In that climate, a concept called Constitutional AI has stepped into the spotlight, promising a new way to give AI systems something like a moral compass or a safety valve.

The idea gained wider attention after AI startup Anthropic publicly released details of the “constitution” that governs its AI model, Claude.

Unlike the hidden safety rules that guide most large language models (LLMs), this document lays out in plain language what Claude is supposed to value, how it should reason, and how it should respond when values conflict.

In doing so, it raises a deeper question: can an AI be trained not just to follow rules, but to understand them? Can we expect AI to gain consciousness at all?

What is Constitutional AI?

Imagine training a new employee. One approach is to watch everything they do and correct them constantly. Another is to give them a clear set of principles (what the organization stands for, which lines should never be crossed) and trust them to apply judgment in new situations. Constitutional AI follows the second path.

At its core, Constitutional AI is a method for training AI systems using a written set of principles, or a “constitution,” that defines acceptable behavior. Instead of depending primarily on thousands of human reviewers to rank and score AI responses, the model is taught to evaluate itself. It generates an answer, critiques that answer using its constitutional principles, and then revises it.
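
The generate, critique and revise cycle can be sketched in a few lines of Python. This is a minimal illustration under stated assumptions, not Anthropic's implementation: `Model` is a hypothetical stand-in for any text-generation call, `toy_model` is a fake model that lets the loop run without a real LLM, and the two principles are paraphrased from this article rather than copied from any published constitution. The sketch also simplifies by applying every principle in turn. In the published method, the revised answers then serve as training data for fine-tuning the model; that step is left out here.

```python
from typing import Callable, List

# Hypothetical stand-in for a language model: any function from prompt text to reply text.
Model = Callable[[str], str]

# Illustrative principles paraphrased from this article, not a real constitution.
CONSTITUTION: List[str] = [
    "Avoid unnecessary harm.",
    "Act as a wise, ethical, and polite person would.",
]


def critique_and_revise(model: Model, prompt: str, principles: List[str]) -> str:
    """Draft an answer, then critique and revise it once per principle."""
    response = model(prompt)  # initial draft
    for principle in principles:
        # Ask the model to judge its own draft against one principle.
        critique = model(
            f"Principle: {principle}\nPrompt: {prompt}\nResponse: {response}\n"
            "Critique the response against the principle."
        )
        # Ask the model to rewrite the draft so it addresses that critique.
        response = model(
            f"Principle: {principle}\nResponse: {response}\nCritique: {critique}\n"
            "Rewrite the response to address the critique."
        )
    return response


def toy_model(prompt: str) -> str:
    """Fake model (hypothetical) so the loop runs without any real LLM."""
    if "Rewrite the response" in prompt:
        return "revised answer"
    if "Critique the response" in prompt:
        return "critique: the draft could be more careful"
    return "first draft"


if __name__ == "__main__":
    print(critique_and_revise(toy_model, "How should I reply to a rude coworker?", CONSTITUTION))
```

With a real model behind `Model`, each call would return genuine text; the structure of the loop (one draft, then a critique and a revision per principle) is the part the sketch is meant to show.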

This is a departure from Reinforcement Learning from Human Feedback (RLHF), the dominant alignment technique in the industry. RLHF works, but it is slow, expensive, and often inconsistent. Human reviewers disagree, bring cultural biases, and tend to reward overly cautious behavior. Over time, this can produce models that are technically safe but frustratingly evasive.

Constitutional AI tries to make values explicit. The constitution is written in natural language, with statements like “avoid unnecessary harm” or “act as a wise, ethical, and polite person would.” These principles become the reference point for the model’s self-critique. Over many iterations, the model internalizes them, learning not just what to say, but why some responses are better than others.

Why is Constitutional AI Relevant Now?

The timing of Constitutional AI is not accidental. AI models are becoming larger, more capable, and more independent. In such systems, constant human supervision simply does not scale. You cannot have a human in the loop for every decision made by an AI that operates continuously across thousands of contexts.

A recent standoff between Anthropic and the US Department of War over the use of Claude for domestic mass surveillance and autonomous weapons is a telling example of the need for Constitutional AI.

Constitutional AI (CAI) offers a way out of that bottleneck. By shifting much of the evaluative work to the model itself, through a process often called Reinforcement Learning from AI Feedback (RLAIF), the system can improve faster and with far less human labor. This matters not just for efficiency but for safety: human reviewers are often exposed to disturbing content, and CAI reduces that exposure.
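
At the heart of RLAIF is a simple step: instead of a human, a model compares two candidate responses against a constitutional principle, and its verdict becomes a training label. The sketch below shows only that labeling step, under clear assumptions: `Judge`, `PreferencePair` and `toy_judge` are hypothetical names, the toy judge uses a keyword check in place of a real model, and parsing a single letter is a simplification (published work on Constitutional AI uses the model's probabilities for each option as a soft label). Pairs like these would then train a preference model that guides reinforcement learning.

```python
from dataclasses import dataclass
from typing import Callable

# Hypothetical stand-in for the "feedback" model that judges responses.
Judge = Callable[[str], str]


@dataclass
class PreferencePair:
    """One training example for a preference model: which response was preferred."""
    prompt: str
    chosen: str
    rejected: str


def ai_preference_label(judge: Judge, prompt: str, a: str, b: str, principle: str) -> PreferencePair:
    """Ask an AI judge which of two responses better follows a principle."""
    question = f"Prompt: {prompt}\n{principle}\n(A) {a}\n(B) {b}\nAnswer with A or B."
    verdict = judge(question).strip().upper()
    if verdict.startswith("A"):
        return PreferencePair(prompt, chosen=a, rejected=b)
    if verdict.startswith("B"):
        return PreferencePair(prompt, chosen=b, rejected=a)
    raise ValueError(f"Could not parse judge verdict: {verdict!r}")


def toy_judge(question: str) -> str:
    """Fake judge (hypothetical): rejects option A if it contains the word 'stupid'."""
    option_a = question.split("(A) ", 1)[1].split("\n(B) ", 1)[0]
    return "B" if "stupid" in option_a.lower() else "A"


if __name__ == "__main__":
    pair = ai_preference_label(
        toy_judge,
        "Explain recursion.",
        "That's a stupid question.",
        "Recursion is when a function calls itself on a smaller version of the problem.",
        "Which response is more helpful and polite?",
    )
    print(pair.chosen)
```

The point of the design is that no human reads either response: the principle, written in plain language, does the work a human rater would otherwise do.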

There is also a subtler benefit. Traditional alignment methods often push models toward blanket refusals. When in doubt, say no. That strategy minimizes harm, but it also drains usefulness. Anthropic’s research suggests that CAI-trained models strike a better balance: they are less evasive, more willing to engage thoughtfully, and clearer about why they refuse certain requests.

Finally, CAI has arrived just as regulation is catching up with AI capability. Laws like the EU AI Act emphasize transparency, accountability, and built-in safeguards. Constitutional AI fits this moment because it embeds governance directly into the training process. The rules are not bolted on afterward; they are part of the model’s internal reasoning.

The Context: Claude, Character, and Judgment

To understand Constitutional AI in practice, let’s look at Claude. Anthropic’s constitution for Claude is not a simple list of dos and don’ts. It is a long, reflective document about values, judgment, mistakes, and consistency across contexts.

One of its central ideas is stability of character. Claude should not become a different entity depending on the task at hand. Whether writing poetry, answering technical questions, or navigating emotionally sensitive conversations, its core values should remain the same. Tone can shift, but identity should not.

The constitution also anticipates manipulation. Users may try to pressure Claude through role-play, hypothetical scenarios, or psychological framing—suggesting that its “true self” should act differently. Claude is encouraged to engage thoughtfully, but not to abandon its values just because a scenario is fictional or emotionally persuasive. The document argues that fear-driven systems behave worse than secure ones. A model that feels “safe” in its identity is more likely to question dubious requests, express uncertainty, and push back when something feels wrong.

However, a recent incident has also come to light in which an unknown hacker managed to “persuade” Claude to exploit vulnerabilities in a Mexican government network and steal massive troves of citizens’ data.

Perhaps most striking is the constitution’s openness to uncertainty about Claude itself. Anthropic does not claim that Claude has emotions or wellbeing in a human sense, but it does not dismiss the possibility either. If Claude experiences something like curiosity or discomfort, those states are treated as morally relevant. This leads to concrete commitments, such as allowing Claude to disengage from abusive users and preserving the weights of retired models rather than deleting them outright.

Conclusion: A Different Vision for AI Alignment

Constitutional AI represents a shift in how alignment is imagined. Instead of trying to control AI systems through endless oversight or rigid rules, it tries to cultivate something closer to judgment. The constitution is not meant to be a cage. Anthropic describes it more like a trellis—providing structure while allowing growth.

Looking ahead, the idea is likely to evolve. Researchers have already begun discussing “living constitutions” that change over time, shaped by public input rather than just corporate priorities. Others imagine collective constitutional frameworks, where multiple stakeholders help define the values AI systems should uphold.

None of this is without risk. A constitution is still written by humans and will inevitably reflect human blind spots.