Seattle-based Amazon is restricting how AI agents access the web on AWS, introducing domain-level controls to reduce risks such as prompt injection attacks and data leakage.

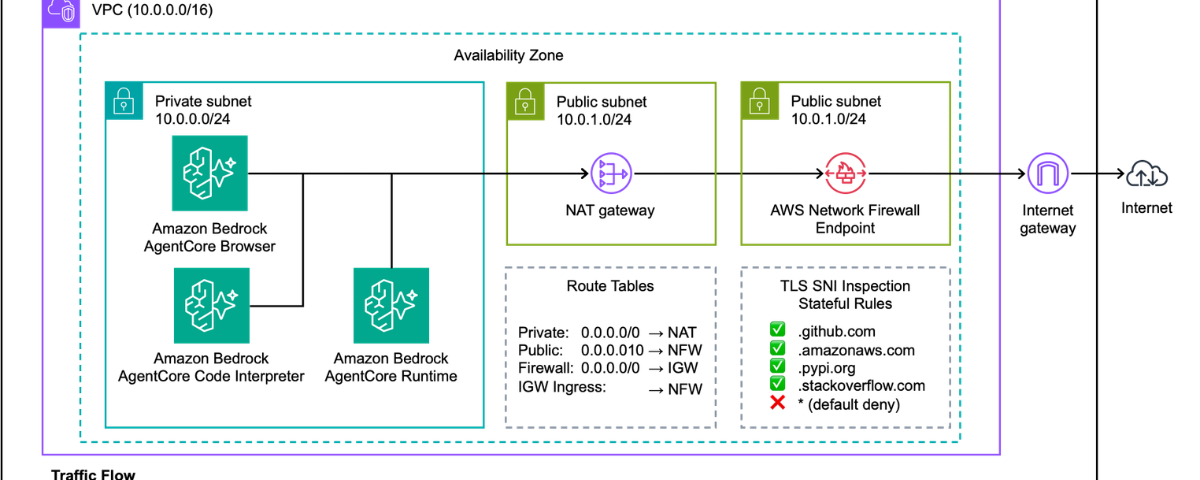

In a recent update to its AWS platform, the company outlined how developers can use allowlists to restrict AI agents’ web access to approved domains. The approach combines Amazon Bedrock AgentCore tools with AWS Network Firewall to route outbound traffic through controlled layers that can block unauthorized domains and log activity for monitoring and compliance. Amazon is focusing on infrastructure-level AI security rather than building agents directly. The move reflects a broader shift toward securing AI agents that browse the web, a growing concern for enterprises deploying LLM-powered automation at scale.

Why AI Agent Web Access Is Becoming a Security Risk

The change addresses a growing challenge with AI agents that browse the web or interact with external tools. While these systems enable tasks such as research and automation, they can also be manipulated into accessing unintended websites or be exposed to prompt injection attacks, which raises the risk of data leakage and unsafe interactions.

Research from the US-based National Institute of Standards and Technology highlights such security risks, stating that “Indirect prompt injection attacks occur when adversaries remotely (i.e., without a direct interface) exploit LLM-integrated applications by injecting prompts into data likely to be retrieved.”

Rather than relying on AI models to determine safe behavior, Amazon’s approach shifts control to the infrastructure layer, which enforces domain-level filtering and default-deny policies regardless of what the agent is instructed to do. As a result, organizations can ensure AI agents only access approved destinations while maintaining visibility into their activity.
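To make the default-deny idea concrete, here is a minimal Python sketch of domain allowlist matching with a decision log. It is an illustration only, not Amazon’s implementation or an AWS Network Firewall configuration: the names `ALLOWED_DOMAINS`, `is_allowed` and `check_request` and the example domains are hypothetical, and in the setup the article describes the check is enforced in the network path rather than in application code.

```python
from urllib.parse import urlsplit

# Hypothetical allowlist for illustration. A leading dot means
# "this domain and all of its subdomains"; other entries match exactly.
ALLOWED_DOMAINS = {"docs.aws.amazon.com", ".wikipedia.org"}


def is_allowed(url, allowed=ALLOWED_DOMAINS):
    """Return True only if the URL's host matches an allowlist entry."""
    try:
        host = (urlsplit(url).hostname or "").lower().rstrip(".")
    except ValueError:
        return False  # malformed URL: deny
    if not host:
        return False  # no host to evaluate: deny
    for entry in allowed:
        if entry.startswith("."):
            # Match the bare domain or any subdomain, but not look-alikes
            # such as "evilwikipedia.org".
            if host == entry[1:] or host.endswith(entry):
                return True
        elif host == entry:
            return True
    return False  # default-deny: anything not explicitly listed is blocked


def check_request(url, log):
    """Record the decision for auditing, then return whether to proceed."""
    allowed = is_allowed(url)
    log.append(("ALLOW" if allowed else "DENY", url))
    return allowed
```

Because the function returns `False` unless a rule matches, a destination the agent was tricked into requesting is blocked without the model having to recognize the attack, and every decision lands in the log.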

As enterprises expand the use of AI agents in real-world workflows, the focus is moving beyond capability to control. A similar shift is visible in how companies are building AI-ready infrastructure, as seen in Tata Play Fiber’s recent move to unify fragmented data sources using IBM watsonx. The question is no longer just what these systems can do, but how far they should be allowed to go when interacting with the open web.
